The GPU

While the mainboard was dead as a doornail, the image that showed on the display indicated that the power supply, the CRT and its controlling electronics were all still just fine. If I were to make a server that could keep up the appearance of a still-working Mac, I'd be best off keeping that little CRT working. But where would I get a graphics card that could output 512x342 black-and-white pixels and interface with the Dockstar? There aren't too many options there, so I decided to build my own GPU.

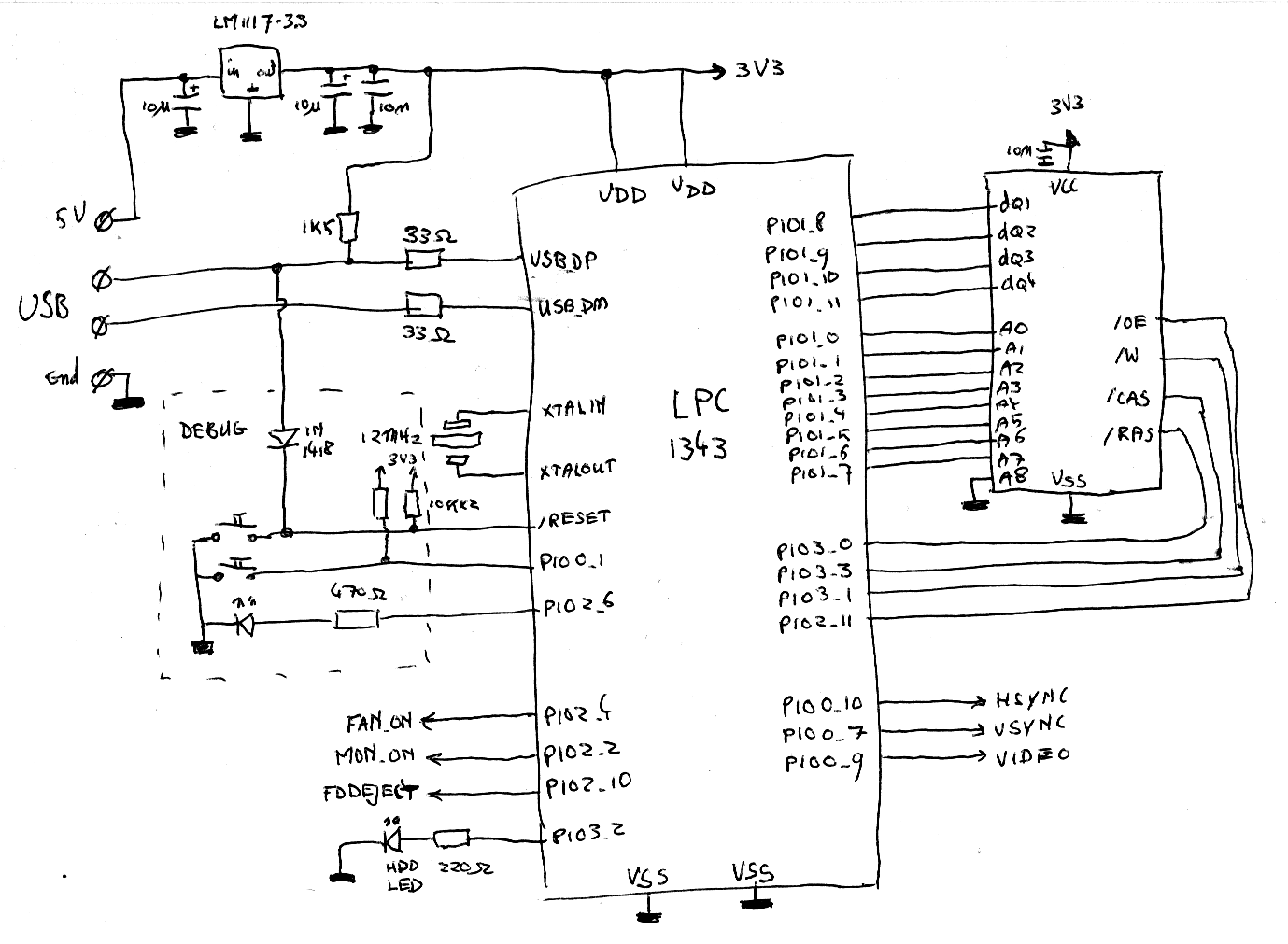

A GPU, eh? First of all, that would mean getting the pixels out of the Dockstar's memory. The Dockstar only has two interfaces available to get these pixels moving quickly enough: Ethernet and USB. Because of the abundance of USB ports, the choice to use one of these for the data transfer was an easy one. Second of all, I needed something to output the pixels to the display. I could've used discrete logic or an FPGA for that, but it was likely I'd need a microcontroller for the USB connection anyway. Besides that, I hadn't done anything yet with the new ARM Cortex CPUs I'd recently got, so I decided a 72MHz LPC1343 oughtta be powerful enough to do some pixel-pushing.

The LPC1343 had almost everything I needed: enough flash to store a large program in, USB for the connection to the Dockstar, hardware timers to make the timing to the CRT easier and an SPI port I could abuse to output pixels to the display without too much CPU overhead. One thing the device didn't have was enough video memory: I needed 512*342=171 KBit of storage for one screen, which is about 21KBytes. The LPC1343 only has 8K of RAM, so I needed to add an external piece of memory. Because I'm not too fond of soldering many wires, I re-used a GM71C4256A 4-bit DRAM chip I already knew from my AVR-based CP/M emulator. The 128KB it provided would be more than enough to store a screenful of pixels.
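The memory arithmetic above can be checked with a few lines of Python. This is only a sanity check of the numbers in the text; the variable names are mine, and the DRAM size is simply the 128KB quoted above.

```python
# Sanity check of the video-memory numbers quoted in the text.
WIDTH, HEIGHT = 512, 342           # Mac CRT resolution, 1 bit per pixel

frame_bits = WIDTH * HEIGHT        # 175104 bits, i.e. 171 Kbit
frame_bytes = frame_bits // 8      # 21888 bytes, a bit over 21 KB

lpc_sram_bytes = 8 * 1024          # on-chip RAM of the LPC1343, per the text
dram_bytes = 128 * 1024            # external DRAM size, per the text

print(f"one frame: {frame_bits // 1024} Kbit = {frame_bytes} bytes")
print(f"fits in on-chip RAM: {frame_bytes <= lpc_sram_bytes}")
print(f"whole frames that fit in the DRAM: {dram_bytes // frame_bytes}")
```

So a single frame is nearly three times the size of the on-chip RAM, while the DRAM has room for several frames.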

All in all, I arrived at this schematic:

There really isn't much to say about the schematic because 99% of the heavy lifting is in the firmware, contained in the LPC1343 in the middle. It is powered by an LM1117-3.3 linear regulator, which converts the 5V obtained from the USB connector to the 3.3V the LPC can use. Underneath the USB lines are some buttons and an LED used to reset the device into the bootloader contained in a small piece of ROM inside the LPC. This is mostly used for programming and debugging. On the bottom left are some GPIO ports that I needed for the rest of the peripherals. On the right of the LPC, you can see the output to the monitor and the DRAM chip, which runs at 3.3V. This actually is out-of-spec (it's supposed to run on 5V) but it works without problems at this voltage.

The LPC1343 is an LQFP chip with 48 teeny-tiny pins. While I don't have too much trouble soldering it, I wasn't going to etch my own PCB, so I put it onto an adapter board and soldered that to a piece of prototyping PCB, like an overgrown DIP chip. In the picture you can see the first stab at building something working. The finished PCB got a lot more populated; more on that later.

The firmware for the device was a bit more tricky than I thought at first. Besides the usual snafus like misplaced letters, misunderstood documentation and general fail on my side, there were a few things that were of a more serious nature. First of all, the bootloader in the LPC13xx/LPC17xx chips. In theory, it's great: you can connect its USB lines to a PC while holding a few pins at a certain level, and voila, a USB disk appears. Copy your firmware binary onto it and you're essentially flashing the controller... as long as you're under Windows. The Linux FAT implementation does some low-level stuff a little differently, resulting in an incorrectly flashed controller... Fortunately, there's still the ancient little userspace toolset called mtools. Using that, the LPC can be flashed correctly. For reference, if you use a line like

sudo mcopy -o -i /dev/sda firmware.bin ::/ && sudo blockdev --flushbufs /dev/sda

your LPCs should get programmed just fine.

I also had some speed issues. The LPC has no problem pushing the pixels to the display quickly enough thanks to the SPI controller I used, and even with the tedious task of fetching the data from the external RAM first, it ran just fine. Problems started appearing when I wanted to implement the USB interface to actually make the Dockstar write to display RAM: the ARM still had enough power to perform the tasks, but I ran into timing issues. Basically, I couldn't handle the USB transfers quickly enough to be done before I had to write another line to the CRT, thereby throwing off the timings and introducing many ugly glitches in the image. I solved that by creating a routine that estimates the time I have left before I have to write another line, and only processes as many bytes as fit in that time. The disadvantage is that a routine like that introduces a lot of overhead in switching the DRAM between tasks; in the end I could only upload about 4 full frames per second to the GPU. Luckily, implementing RLE acceleration was already planned from the start, so I only hit the 4FPS worst-case scenario when the complete screen had to be redrawn.
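To make the time-budget idea a bit more concrete, here is a minimal sketch in Python. It is not the actual firmware (which runs on the LPC itself); the function names, the per-byte cost and the fixed switching overhead are all hypothetical numbers picked for illustration.

```python
def bytes_that_fit(time_left_us, per_byte_us, switch_overhead_us):
    """How many bytes can be handled before the next scanline is due.

    All three parameters are illustrative assumptions: the time left in
    the gap, the cost of writing one byte, and the fixed cost of
    switching the DRAM over to USB work and back again.
    """
    usable_us = time_left_us - switch_overhead_us
    if usable_us <= 0:
        return 0                      # gap too short: touch nothing
    return usable_us // per_byte_us


def schedule(pending, gaps_us, per_byte_us, switch_overhead_us):
    """Split pending USB data over successive gaps between scanlines.

    Returns one chunk per gap visited (possibly empty) plus whatever
    data is still left over afterwards.
    """
    chunks = []
    offset = 0
    for gap_us in gaps_us:
        if offset >= len(pending):
            break
        n = bytes_that_fit(gap_us, per_byte_us, switch_overhead_us)
        n = min(n, len(pending) - offset)
        chunks.append(pending[offset:offset + n])
        offset += n
    return chunks, pending[offset:]


# Ten bytes spread over three gaps of 10, 3 and 20 microseconds.
chunks, leftover = schedule(bytes(range(10)), [10, 3, 20],
                            per_byte_us=2, switch_overhead_us=4)
print([len(c) for c in chunks], len(leftover))
```

The fixed overhead is paid in every gap, which is why short gaps get nothing done at all and why the total throughput drops so much.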

Apart from firmware, I also needed some software on the PC. My first attempt was a userspace tool which could send .png files to the GPU. It worked fairly well, and I even hacked a basic dithering algorithm into it, just for fun. For instance, here are a few hundred PNGs ripped from an episode of the Simpsons, sent to the display as quickly as possible. Notice the varying playback speed, as frames can be processed quicker when there's not much change between two images: this is the RLE algorithm working. The images are all black and white; all grays you see are actually dithered pixels clumped together by the camera. There are some artifacts because of a coding error; these got resolved later on.
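Why would RLE make similar frames go faster? The actual wire format isn't described here, so the following is only a guess at the general idea, sketched in Python: XOR each frame with the previous one (my assumption) so that unchanged pixels become zero bytes, then pack the result as simple (count, value) pairs. The function names are hypothetical.

```python
def xor_delta(prev, cur):
    """Bytes that differ between two frames become non-zero."""
    return bytes(a ^ b for a, b in zip(prev, cur))


def rle_encode(data):
    """Pack data as (run length, value) byte pairs, runs capped at 255."""
    out = bytearray()
    i = 0
    while i < len(data):
        run = 1
        while i + run < len(data) and run < 255 and data[i + run] == data[i]:
            run += 1
        out += bytes((run, data[i]))
        i += run
    return bytes(out)


def rle_decode(packed):
    """Inverse of rle_encode."""
    out = bytearray()
    for k in range(0, len(packed), 2):
        out += bytes([packed[k + 1]]) * packed[k]
    return bytes(out)


FRAME_BYTES = 512 * 342 // 8          # one 1-bit frame, 21888 bytes

prev = bytes(FRAME_BYTES)             # an all-black frame
cur = bytearray(prev)
cur[100] = 0xFF                       # change eight pixels
cur = bytes(cur)

packed = rle_encode(xor_delta(prev, cur))
print(len(cur), "raw bytes ->", len(packed), "packed bytes")
```

With a scheme like this, a frame that barely differs from its predecessor shrinks to a couple of hundred bytes, while a completely different frame gets no benefit, which matches the varying speed in the video.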

Later on, I changed my mind: it would probably be more challenging, but in the end more satisfying and universal, to make a kernel framebuffer driver. This way, when I plugged in the GPU, the kernel would recognize it and create a framebuffer device. A framebuffer device is an abstract representation of a graphics card plus display, and there are a lot of programs which can talk to that: MPlayer, image viewing tools and even X.org. Getting X.org running on the device made things much simpler: any program I would want to run, including web browsers and Macintosh emulators, could run on top of that without any modifications to the programs themselves. So I set to work, and the result was nice to look at: Firefox, running on my workstation, could render itself to a second X session and onto the classic CRT of the Mac. Of course, I couldn't wait to get a Classic Mac emulator (in this case: minivmac) compiled so I could get a glimpse of what the machine would eventually look like: